EE595 Mobile Computing, Sensing, Learning, and Interactions

Previous Projects from CS442: Mobile Computing, Networking & Applications

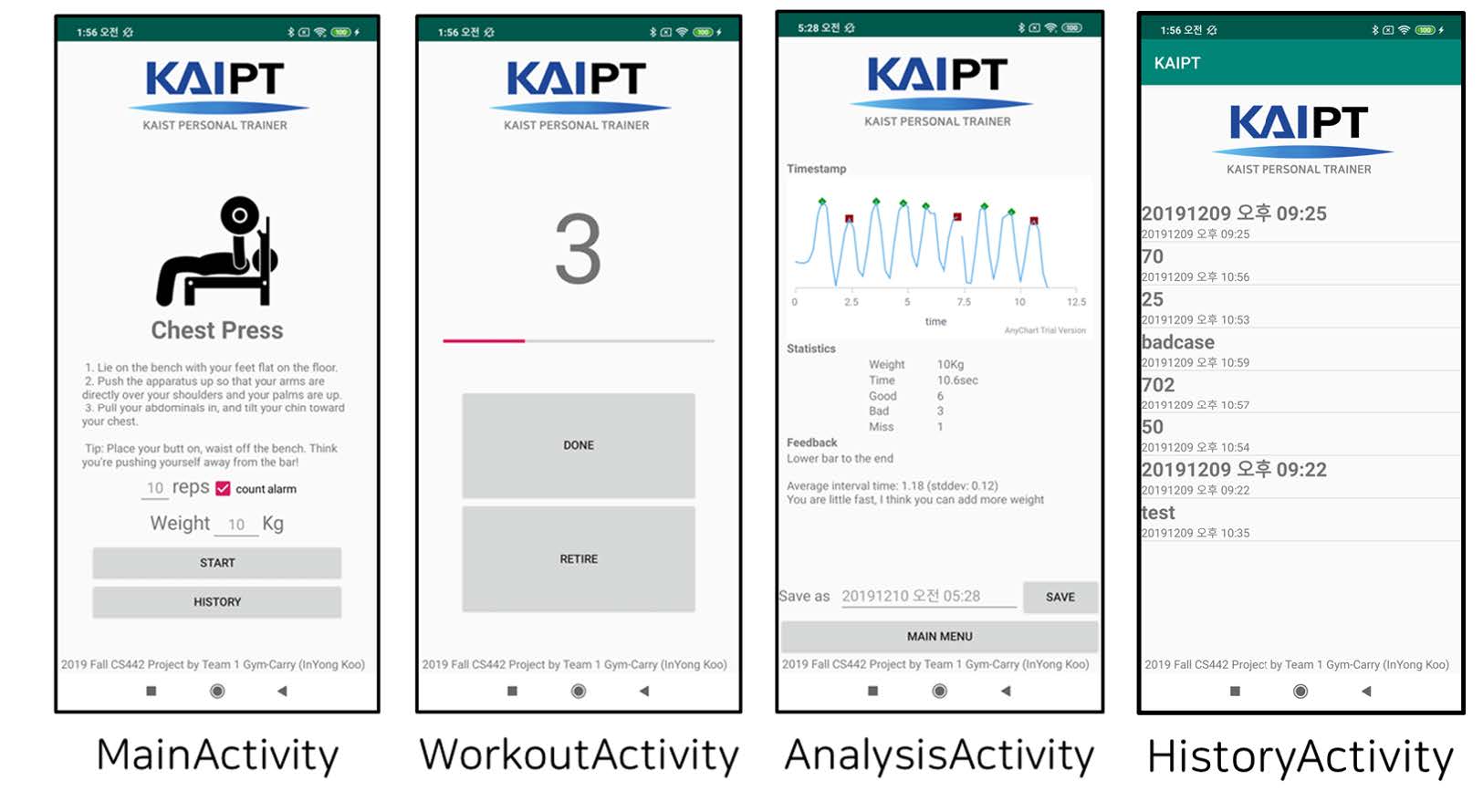

KaiPT: KAIST Personal Trainer

In Yong Koo

Fall 2019

Maintaining health requires continual effort and care. However, students and researchers at KAIST have difficulty staying fit. Hence I introduce KAIPT, a workout support application with exercise evaluation functionality that is easy to use at KAIST gyms. It works with weight training machines and additional equipment to monitor exercise performance. It employs light and proximity sensors, and signal processing of the sensor data yields insight into performance, such as posture relative to the range of motion and the interval between repetitions.

With this understanding, KAIPT diagnoses posture and pace and provides basic advice. Its robustness and effectiveness were tested, but further evaluation is left to future work.

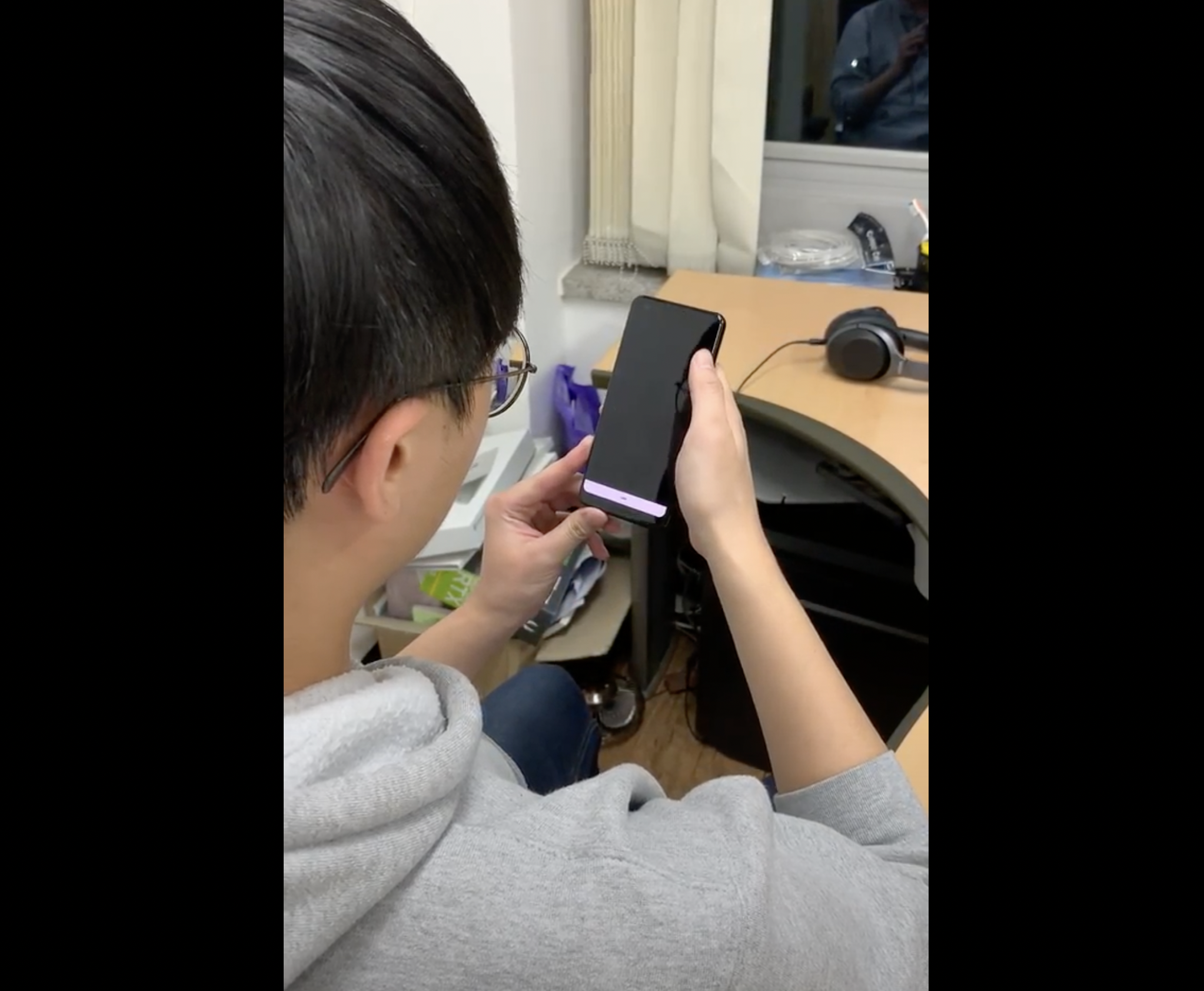

EyeScream: Don't let your eyes be close to smartphone screen

Sungbin Jo and Jaejun Park

Fall 2019

People spend a substantial amount of time looking at their smartphone screens. From waking up in the morning to falling asleep, smartphones are always with users. Eyes are sensitive and tire easily. Looking at a smartphone held close to the eyes causes eye strain and can even reduce sleep quality. However, people are usually unaware of whether they are viewing their devices at a close distance. For example, people use smartphones right in front of their faces in bed.

Although users know that a short viewing distance is unhealthy for their eyes, they still keep their devices close. Measuring the distance continuously is annoying, and judging whether the distance is too close takes considerable effort. It is also hard to feel the negative effects of a short viewing distance, since they do not occur instantly: eye strain usually appears only after a certain period of use. When we touch a hot object, we notice immediately and pull our hand away. Similarly, if users were notified immediately when holding a device too close, they would increase the distance.

In this paper, we propose an eye-care system named EyeScream. The system relieves users of the burden of measuring the distance and helps them keep a proper distance. To reduce the mental load on smartphone users, EyeScream monitors the distance continuously. Additionally, it simulates the negative effect instantly by lowering the screen brightness. We expect this to remind users of the negative effects of a short viewing distance.

Cupeer: An Offline Socializing Application Using Mobile Peer-to-Peer Connectivity

Jaeseok Huh and Adrian Steffan

Fall 2019

In the digital era, over three billion people use social network services (SNS) every day to cultivate relationships with others. However, most services have been criticized for ignoring users' wellbeing while maximizing profit within the platform. In this paper, we propose Cupeer, an offline socializing application that uses peer-to-peer (P2P) connectivity and a decentralized, user-driven matching algorithm. We discuss its design choices, the prototype implementation, and the results of our user survey. Our evaluation shows a high level of user satisfaction with Cupeer and promising directions for future work, including online dating.

We believe that users' growing awareness of privacy will give rise to services like Cupeer. The source code is available at https://github.com/iriszero/Cupeer.

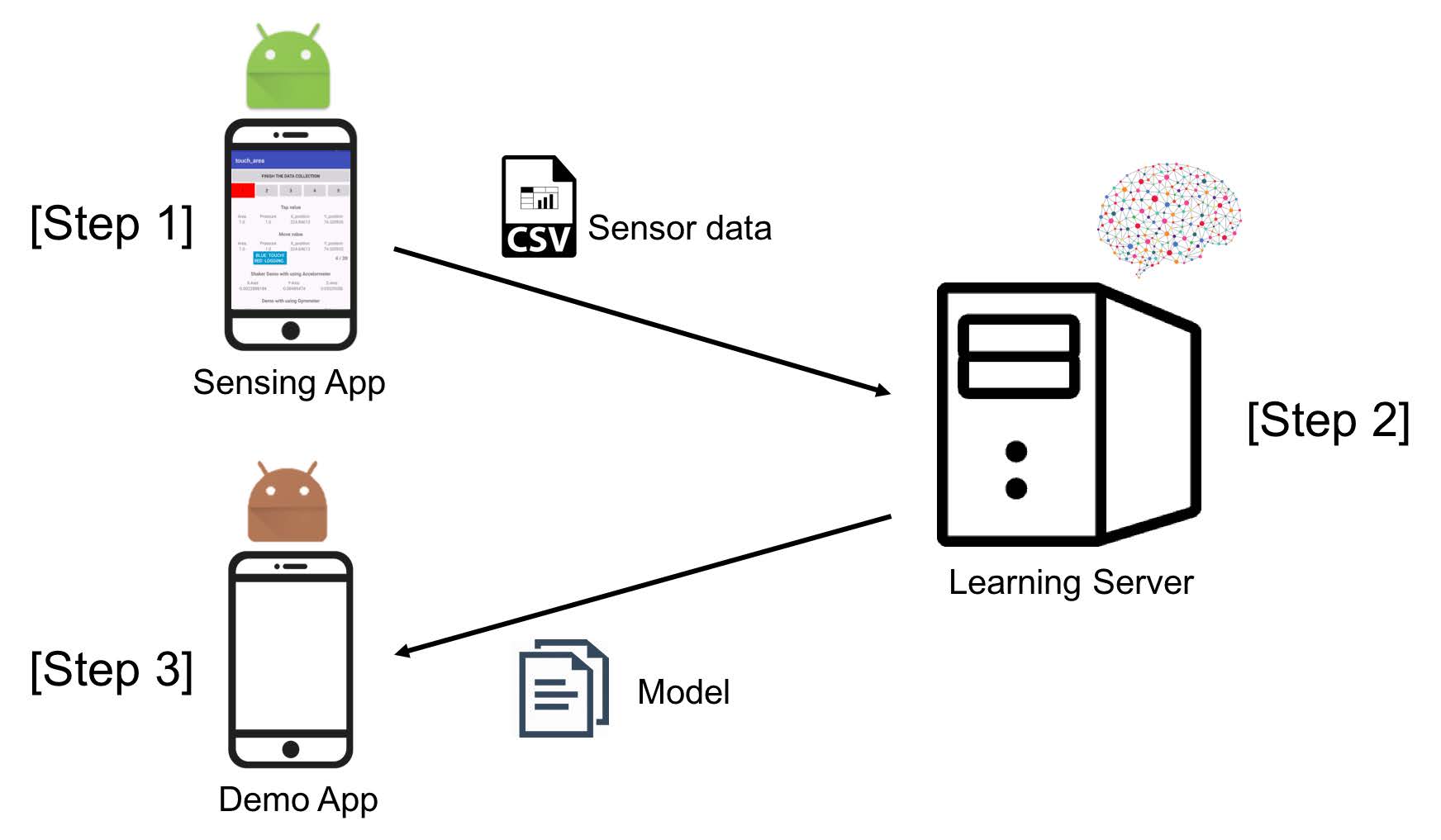

Detective Echo: Object classification system using acoustic signal only

Ryuhaerang Choi, Cheolho Jeon, and Sooyeong Lee

Fall 2019

Recently, as Deep Learning (DL) technologies have grown, DL applications such as object classification, speech recognition, and natural language processing have developed further. In object classification specifically, computer vision achieves accuracy of up to almost 99%. However, approaches that rely on computer vision alone have limitations. In this research, we propose our own acoustic-signal-based object classification techniques, using a smartphone and its built-in sensors to classify arbitrary objects. The results demonstrate that the proposed approach is feasible and broaden the applicability of acoustic signals beyond previous vision-only approaches.

LiveSync: Acoustic-based Live Video Synchronization

Taeckyung Lee and Junhyeok Choi

Fall 2019

With growing interest in video-sharing platforms, live video streaming has become popular on platforms such as YouTube and Twitch. In a live streaming context, multiple users can watch the same video simultaneously on their own mobile devices.

However, due to wireless network latency and diverse device characteristics, individual users' video timelines may differ slightly from one another, causing uncomfortable overlapping sounds or spoilers.

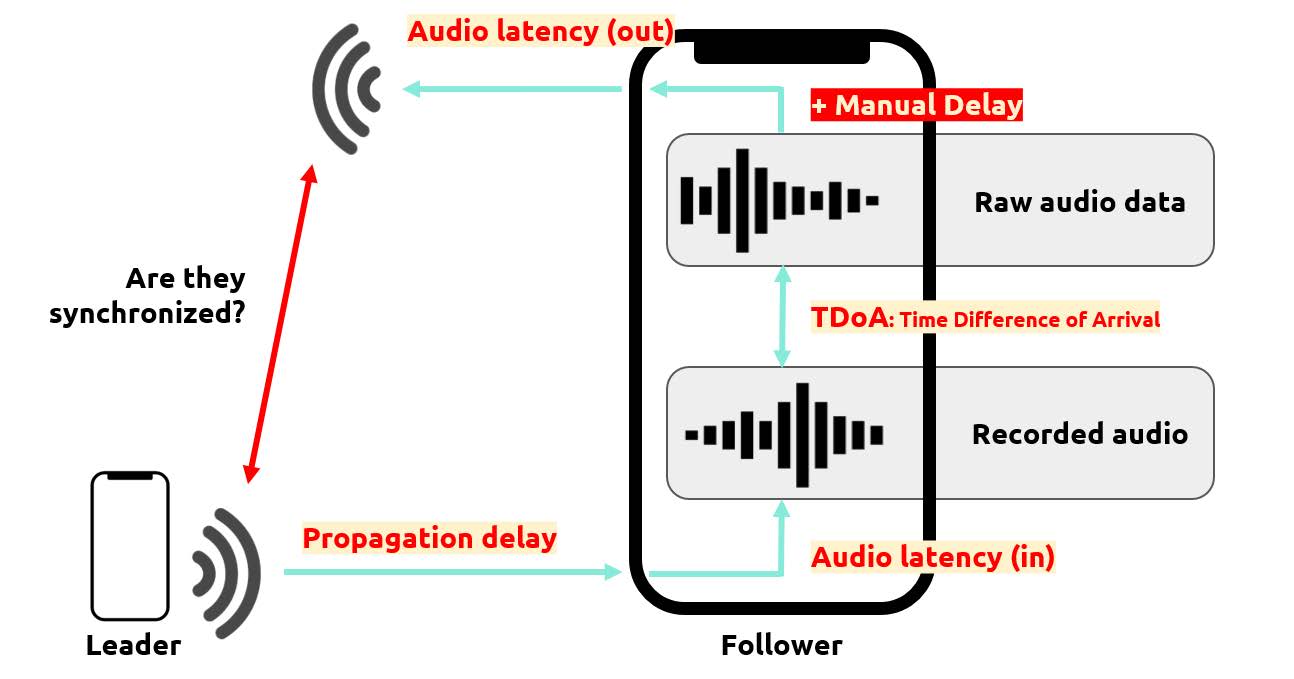

We present LiveSync, an automatic acoustic-based video synchronization tool that supports multiple users. LiveSync performs audio latency measurement, TDoA (Time Difference of Arrival) measurement, and propagation delay estimation to calculate the delay it must apply to synchronize its audio output with the sounds already playing nearby.
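The abstract does not say how LiveSync measures the time difference. A common technique for this kind of TDoA step is cross-correlation: the lag at which a recording best matches a known reference signal is taken as the delay. The minimal pure-Python sketch below illustrates that technique only; the function names and toy signals are hypothetical, and LiveSync's actual pipeline may differ.

```python
def estimate_delay(reference, recording, max_lag):
    """Return the lag (in samples) at which `recording` best matches `reference`.

    Brute-force cross-correlation over lags 0..max_lag; a positive lag means
    the recording is delayed relative to the reference.
    """
    best_lag, best_score = 0, float("-inf")
    for lag in range(max_lag + 1):
        score = 0.0
        for i, ref_sample in enumerate(reference):
            j = i + lag
            if j >= len(recording):
                break  # no more overlap at this lag
            score += ref_sample * recording[j]
        if score > best_score:
            best_lag, best_score = lag, score
    return best_lag


def lag_to_seconds(lag, sample_rate):
    """Convert a lag in samples to seconds."""
    return lag / sample_rate


# Toy example: a short pulse pattern that arrives 5 samples late.
reference = [0.0, 1.0, -1.0, 0.5, 0.0, 0.0]
recording = [0.0] * 5 + reference + [0.0] * 5
lag = estimate_delay(reference, recording, max_lag=10)
print(lag, lag_to_seconds(lag, 48_000))
```

A real implementation would typically use an FFT-based correlation for long recordings; the brute-force loop is kept here only for readability.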

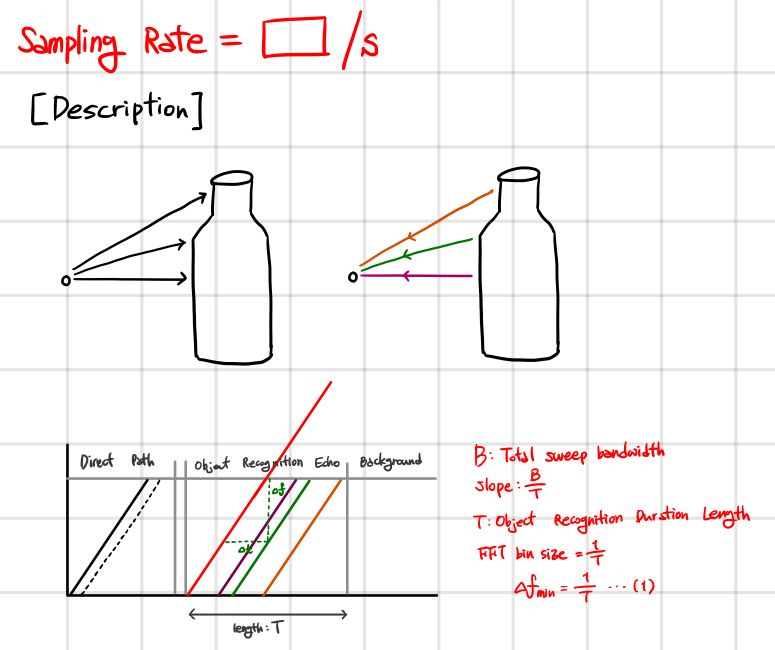

GraspTracker: Tracking smartphone grab posture with inaudible sound

Seungjoo Lee and HyungJun Yoon

Fall 2019

This work presents an application called GraspTracker that tracks the user's hand posture using built-in sensors. The key idea is that, depending on the gripping hand posture, sound propagating through the smartphone's body shows different amplitude changes at each frequency. GraspTracker is designed to eliminate the effect of reflected sound on hand posture classification on a mobile system. It emits inaudible FMCW (frequency-modulated continuous wave) sound and records it with the smartphone's camcorder microphone and primary microphone together. A machine learning classifier trained on the FFT results minimizes the effect of reflected sound and classifies the current gripping hand posture.
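The abstract does not give GraspTracker's signal parameters. As a hedged illustration, the sketch below generates one linear FMCW chirp in an assumed 18-22 kHz band at an assumed 48 kHz sample rate, and measures a recording's amplitude at chosen frequencies via a single DFT term, the kind of per-frequency feature the abstract describes feeding to a classifier. The band, sample rate, and all function names are assumptions.

```python
import math

# Hypothetical parameters: an 18-22 kHz sweep sampled at 48 kHz.
# GraspTracker's actual settings are not given in the abstract.
SAMPLE_RATE = 48_000
F_START, F_END = 18_000.0, 22_000.0
CHIRP_SECONDS = 0.01


def linear_chirp(f_start=F_START, f_end=F_END, duration=CHIRP_SECONDS,
                 sample_rate=SAMPLE_RATE):
    """One FMCW up-chirp whose frequency rises linearly from f_start to f_end."""
    n_samples = int(round(duration * sample_rate))
    sweep_rate = (f_end - f_start) / duration  # Hz per second
    chirp = []
    for n in range(n_samples):
        t = n / sample_rate
        # Phase is the integral of the instantaneous frequency f_start + sweep_rate * t.
        phase = 2.0 * math.pi * (f_start * t + 0.5 * sweep_rate * t * t)
        chirp.append(math.sin(phase))
    return chirp


def amplitude_at(signal, freq, sample_rate=SAMPLE_RATE):
    """Normalized magnitude of the single DFT component of `signal` at `freq` Hz."""
    re = im = 0.0
    for n, x in enumerate(signal):
        angle = 2.0 * math.pi * freq * n / sample_rate
        re += x * math.cos(angle)
        im -= x * math.sin(angle)
    return math.hypot(re, im) / len(signal)


def feature_vector(recording, freqs):
    """Per-frequency amplitudes that a grip-posture classifier could consume."""
    return [amplitude_at(recording, f) for f in freqs]
```

In practice the chirp would be played repeatedly through the speaker, and the recordings from both microphones would be turned into feature vectors like these before classification.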

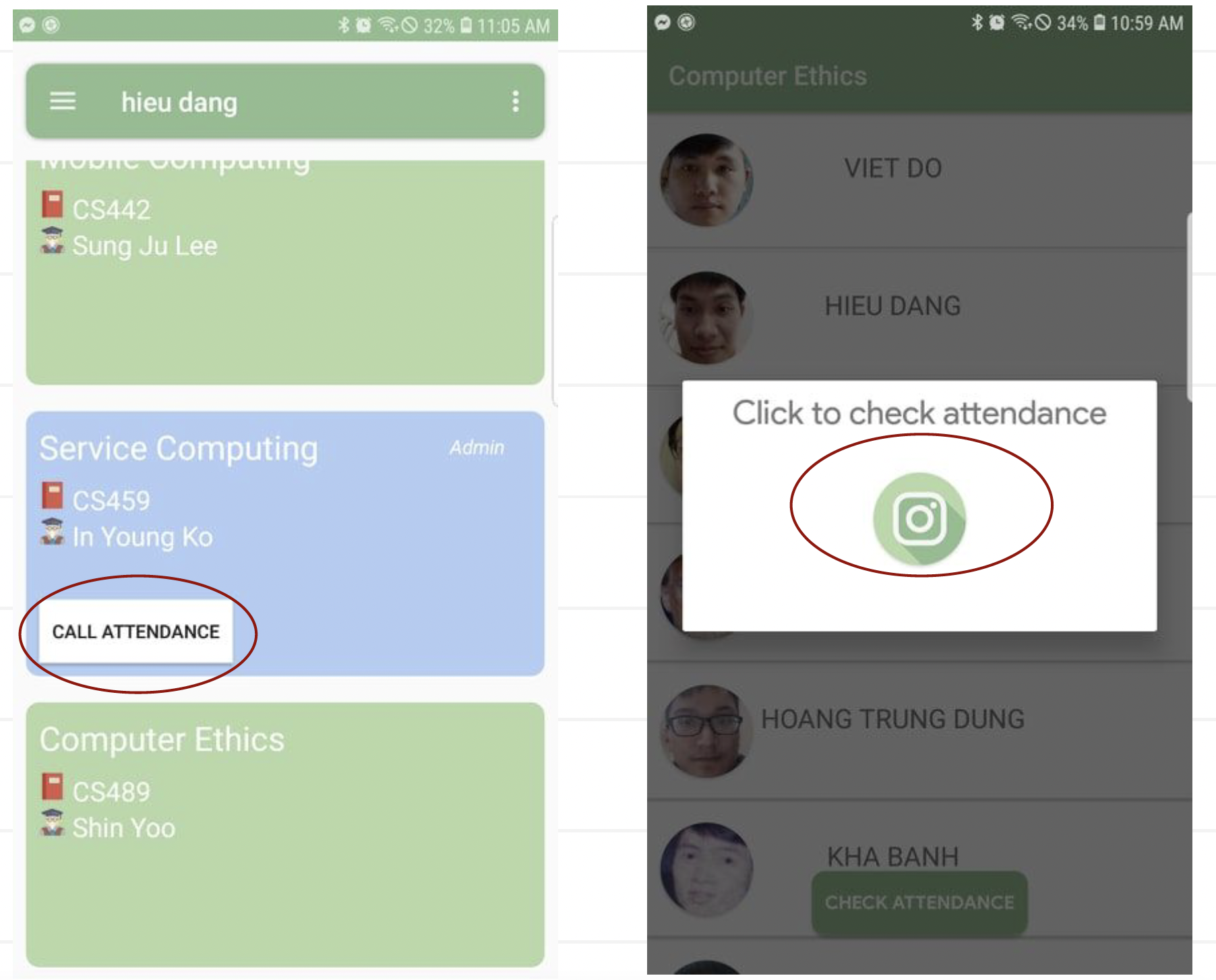

KATIE: KAIST Attendance Tracker with Identification Examination

Viet Do, Viet Anh Le, and Hieu Dang

Fall 2019

In some schools and universities, attendance checking is mandatory and taken very seriously. However, classic methods are repetitive and time-consuming at a large scale. Since smartphones are already very common, the task could be done better with the help of mobile technology. In this paper, we propose KATIE (KAIST Attendance Tracker with Identification Examination), an alternative approach to the attendance checking problem, in which we leverage the mobile phone's Bluetooth signal to detect nearby devices and apply face recognition with a lightweight, mobile-friendly machine learning model to verify the student's identity. In theory, the proposed solution would be sufficient to eliminate cheating and to automate the entire attendance checking pipeline, thus solving the problem at a larger scale.

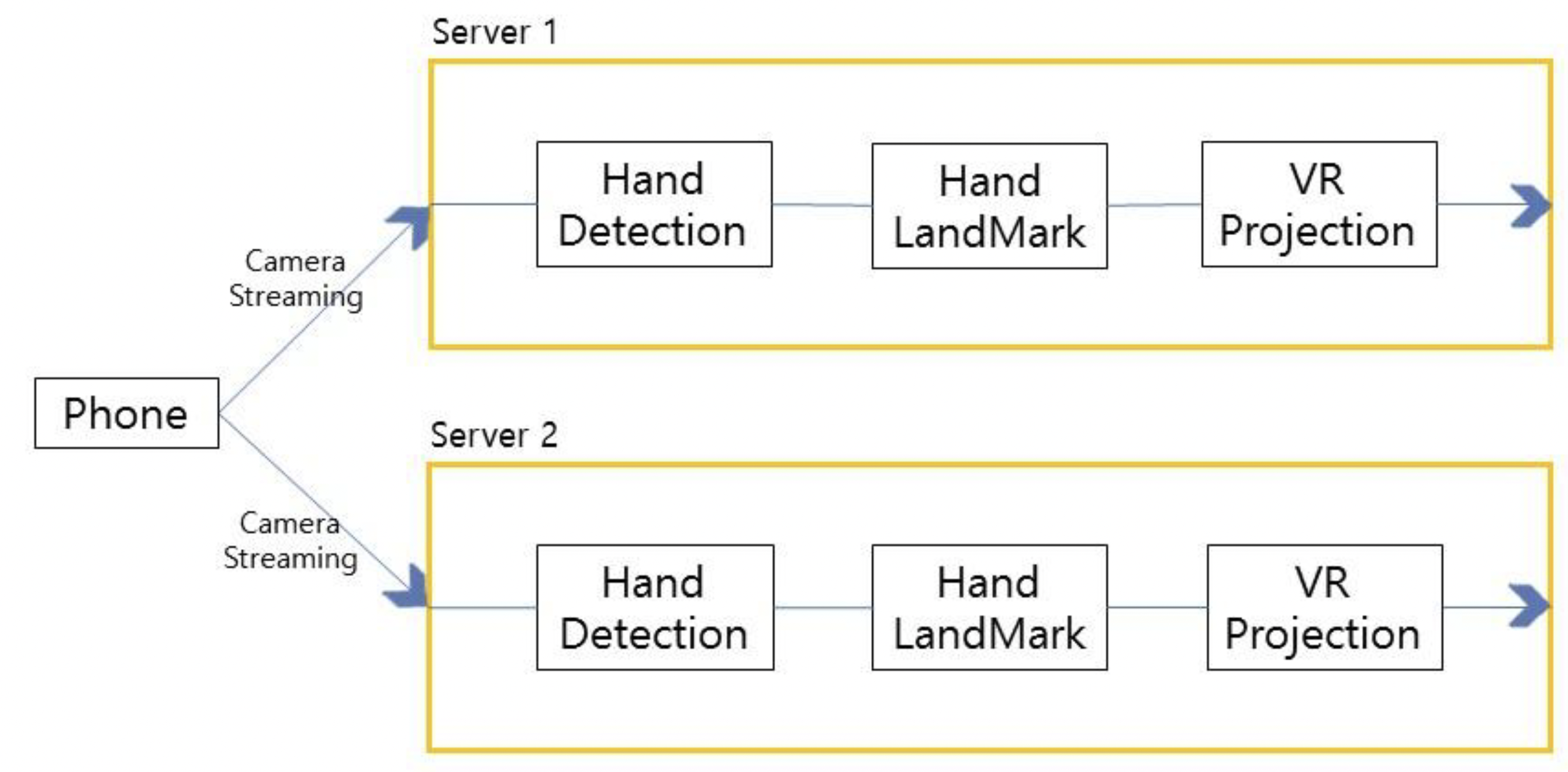

Project VCR - Repurposing the Mobile Phone

Asim Fauzi and Daehyeon Nam

Fall 2019

Most VR applications nowadays require some sort of remote controller paired with an expensive headset. Applications that rely on mobile devices are usually limited to a simple visual experience with no controls. This research addresses this problem by enabling control without any external device other than a head mount and the mobile device itself. Our solution captures hand-landmark data after several stages of processing and uses this data to render the hands in the virtual environment. The hand landmarks are computed by a hand-pose model on images streamed from the mobile device itself. To tackle thermal throttling and mobile CPU limitations, our main contribution is offloading as much of the workload as possible to designated servers with an image partitioning algorithm. With a simple server request, future VR applications can have hand data sent back to the client to mimic real-life hand control. Our results were satisfactory, with variable sub-second latency over the whole round trip from client to server and back to the client. In conclusion, we believe this work could pave the way for more mobile-offloading use cases, specifically for computation-heavy virtual reality.

EyeSafe: Towards a lightweight, low cost ocular diagnosis system

Gu Hong Min and Jae Jun Lee

Fall 2019

Currently, access to medical resources is difficult, which greatly undermines the ability to diagnose diseases that require early detection.

To solve this problem, this paper proposes the concept of EyeSafe, a lightweight, low-cost ocular diagnosis system based on embedded systems and sensors, so that diagnosis can be performed without the aid of doctors or hospitals. Because of technical and time constraints and a lack of manpower, EyeSafe could not be implemented. Instead, this paper discusses the four challenges EyeSafe faced (the difficulty of using smartphone sensors, establishing ground truth, the weak penetration of acoustic sound, and the limited coverage of the course project), why these challenges impeded development, and potential solutions that future researchers can take note of.

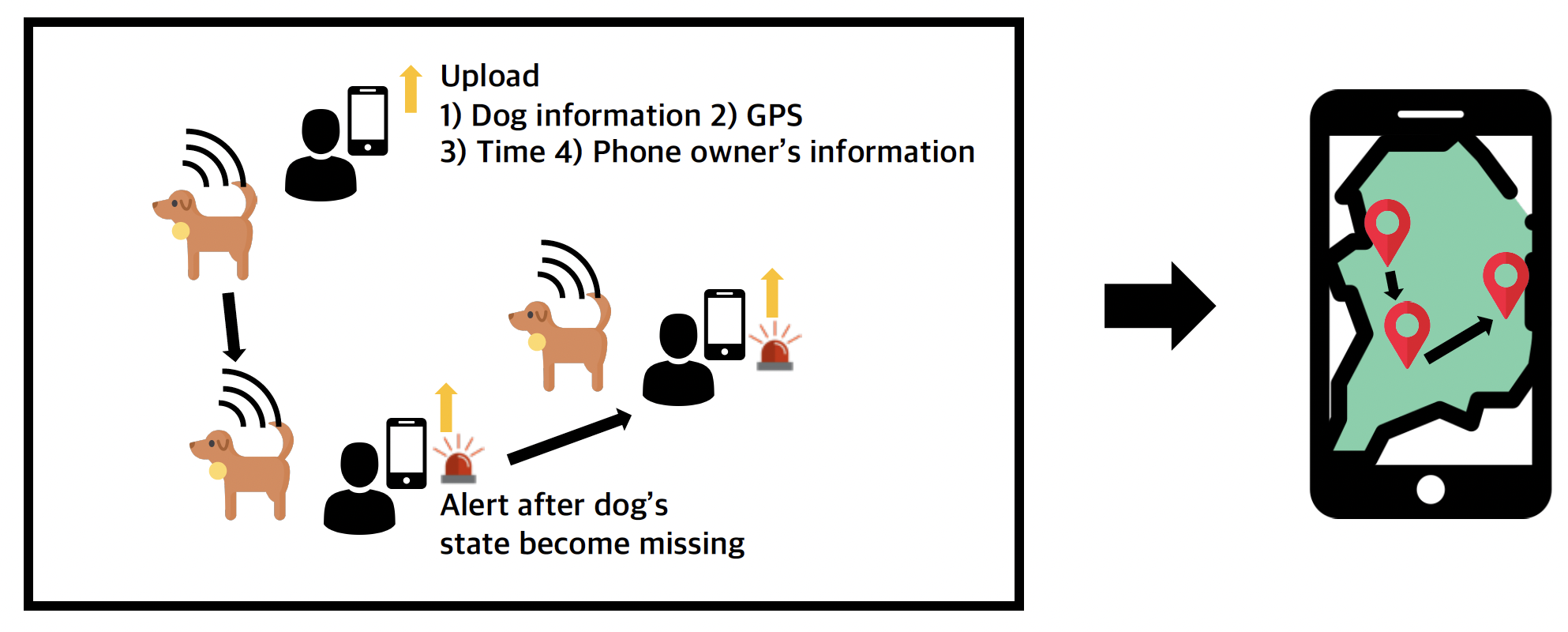

DoggyBuddy: Find your dog, find some help

Yeonsu Kim

Fall 2019

Nowadays, various systems for finding missing dogs have appeared thanks to the commercialization of sensors and crowdsourcing. Two approaches exist for finding a missing dog: 1) tracking the dog's location with a GPS tracker and 2) getting help from the crowd by posting. However, existing GPS dog trackers have short battery life, and with existing crowd-help systems it is not easy to give or get actual help. To mitigate these issues, we propose DoggyBuddy, a crowdsourcing-based sensing system using Bluetooth Low Energy (BLE) beacons. DoggyBuddy supports low-power dog location tracking and makes it easier for the crowd to help: it provides an approximate location of the dog through crowdsourcing and automatically alerts nearby people to a missing dog via the BLE beacon. Interviews with dog owners show that DoggyBuddy's small, light, low-power location tracking is better suited than existing GPS trackers as an everyday safeguard. Interviewees also answered that automatic alerts about nearby missing dogs lower the burden of giving help.
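The abstract says DoggyBuddy provides an approximate location through crowdsourcing but does not specify the estimator. One simple possibility, sketched here purely as an assumption, is an RSSI-weighted centroid of the positions of crowd phones that heard the dog's beacon. The `BeaconSighting` schema, the weighting rule, and the -100 dBm floor are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class BeaconSighting:
    """One report from a crowd phone that heard the dog's BLE beacon (hypothetical schema)."""
    lat: float
    lon: float
    rssi_dbm: float  # received signal strength; values closer to 0 are stronger


def approximate_location(sightings, floor_dbm=-100.0):
    """Weighted centroid of reporter positions, giving stronger signals more weight.

    Assumes the sightings are close together, so plain lat/lon averaging is acceptable.
    """
    if not sightings:
        raise ValueError("no sightings reported")
    # Signals at or below the floor get a tiny positive weight instead of zero.
    weights = [max(s.rssi_dbm - floor_dbm, 1e-6) for s in sightings]
    total = sum(weights)
    lat = sum(w * s.lat for w, s in zip(weights, sightings)) / total
    lon = sum(w * s.lon for w, s in zip(weights, sightings)) / total
    return lat, lon
```

A linear weighting in dBm is the simplest choice; RSSI is noisy indoors and outdoors alike, so the result should be read as a rough area rather than a precise fix.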

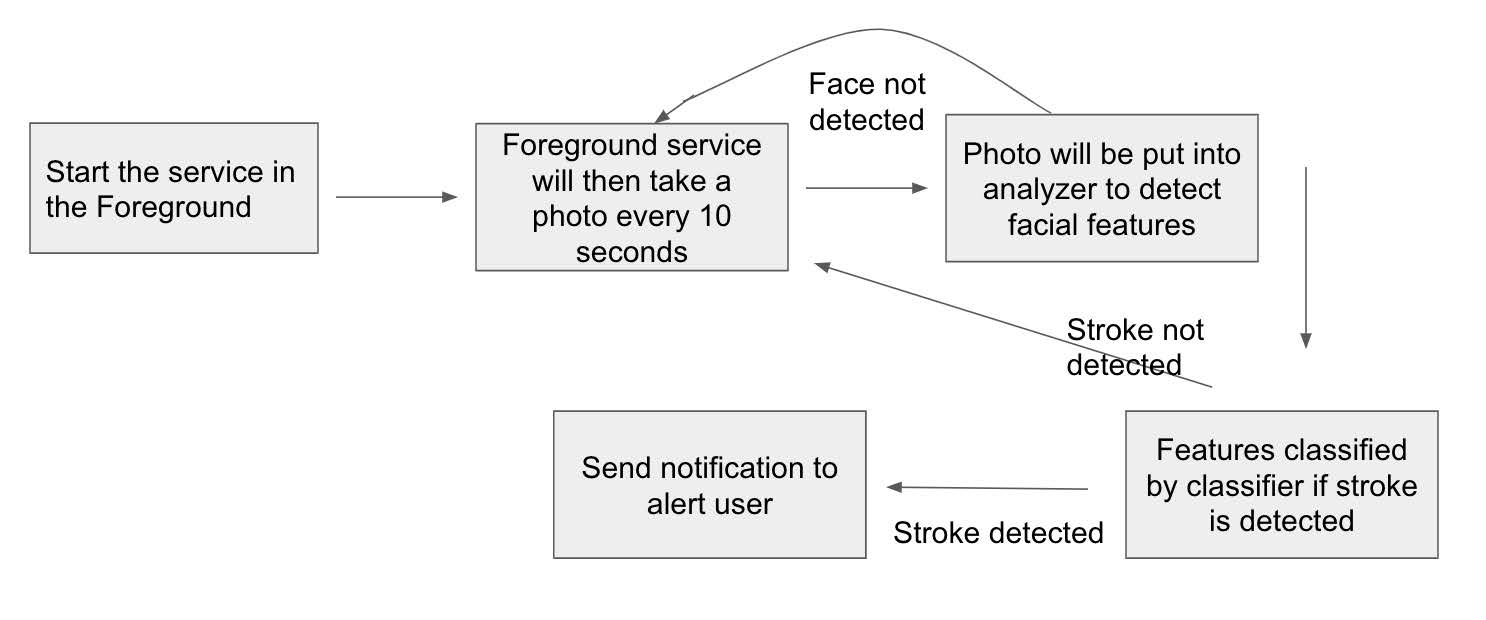

Mobile Application for Passive Stroke Detection

Aimas Lund and Joshua Kim

Fall 2019

In the United States, someone has a stroke every 40 seconds, and every four minutes someone dies of stroke. As of 2017, 140,000 Americans die from strokes annually, making stroke one of the leading causes of death in the US. While applications have been created for detecting stroke, they are limited to confirming that a stroke has already occurred. To address in-the-moment detection and timely medical assistance, we explore the possibility of a passive stroke detection application. To develop this detector, we researched detectable facial features commonly affected during a stroke: the left eye, the right eye, the upper lip area, and the lower lip area. By extracting these features with Google's Firebase Machine Learning Kit and feeding them to a WEKA-based naive Bayes classifier, we developed a stroke detector. The resulting passive application takes one photo at a specified time interval and determines whether the user is having a stroke.

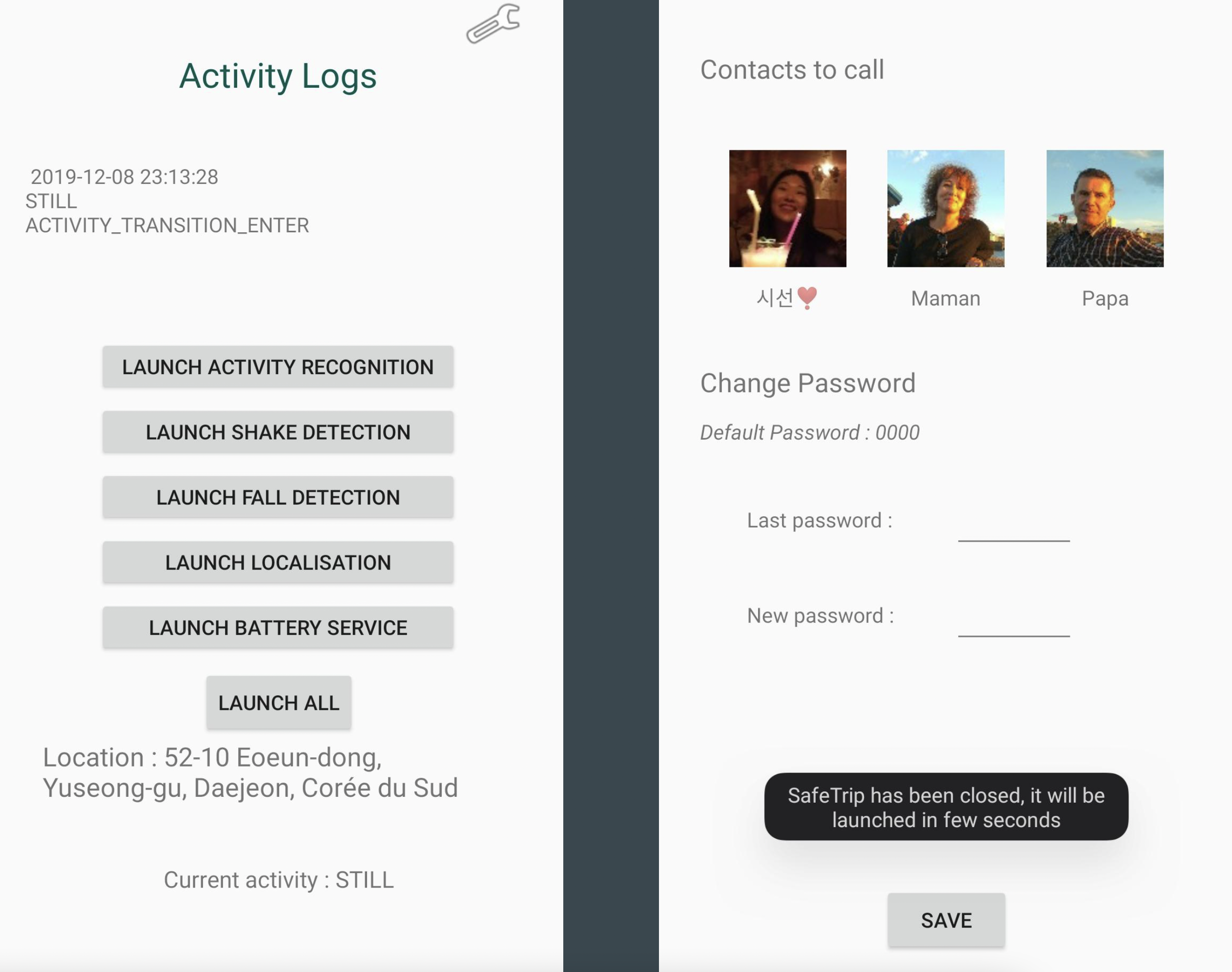

Safe Trip: An Application to Get Home Safely

Martin Hallgren and Quentin Piot

Fall 2019

In this project, we developed an application that automatically detects when the user is being assaulted. It extracts features from the accelerometer and gyroscope and feeds them to a WEKA classifier that labels each instance as "other", "fall", or "run", while Google's Activity Transition API tracks whether the user is in a vehicle. If the user is running, has fallen down, or is in a vehicle, the application gives off a loud sound and sends a text message to the user's chosen contacts. If the user has Internet access, the text message also contains the user's current address. If Internet access is available, the application also sends a notice with the attacked user's position on a map to all other users of the application within a one-kilometer radius. The tests conducted show that the classifier is promising, achieving an F1-score of 94.7%.
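The one-kilometer notification rule requires the distance between two GPS fixes. A standard way to compute it is the haversine great-circle formula. The sketch below assumes a spherical Earth and a hypothetical in-memory map from user IDs to last known positions; it is an illustration, not Safe Trip's actual server code.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius in meters


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in meters between two (lat, lon) points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    d_phi = math.radians(lat2 - lat1)
    d_lambda = math.radians(lon2 - lon1)
    a = (math.sin(d_phi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(d_lambda / 2) ** 2)
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def users_to_notify(victim_pos, other_users, radius_m=1_000.0):
    """IDs of users whose last known position lies within radius_m of the victim.

    `other_users` is a hypothetical dict mapping user ID -> (lat, lon).
    """
    lat0, lon0 = victim_pos
    return [uid for uid, (lat, lon) in other_users.items()
            if haversine_m(lat0, lon0, lat, lon) <= radius_m]
```

A linear scan is fine for a small user base; a larger deployment would usually pre-filter candidates with a spatial index before applying the exact distance check.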

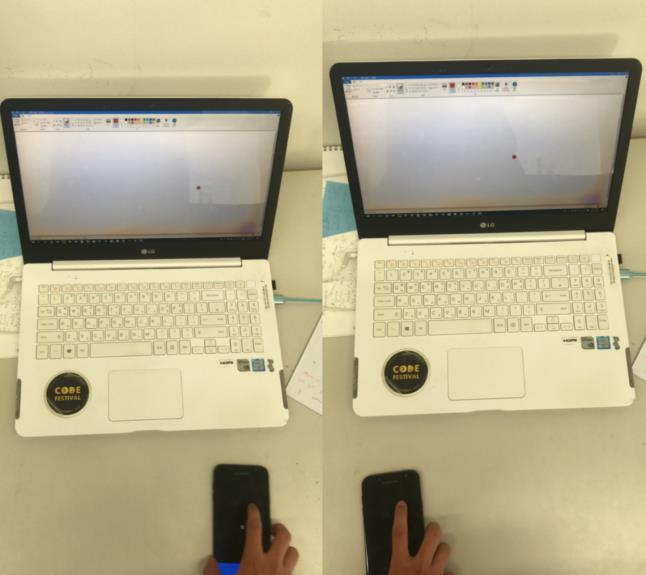

Smart Mouse: identifying the possibility of smartphone mouse on different approaches

Jaemin Shin and Dongjun Youn

Spring 2018

In this work, we demonstrate the idea of using a smartphone as a mouse, aiming to provide a reliable, highly accurate mouse in mobile scenarios. We explore two approaches: a front-camera-based approach and an inaudible-sound-based approach. For each approach, we identify the challenges involved and then suggest novel solutions to them. To achieve a smartphone mouse with better performance, we propose future work on both approaches.

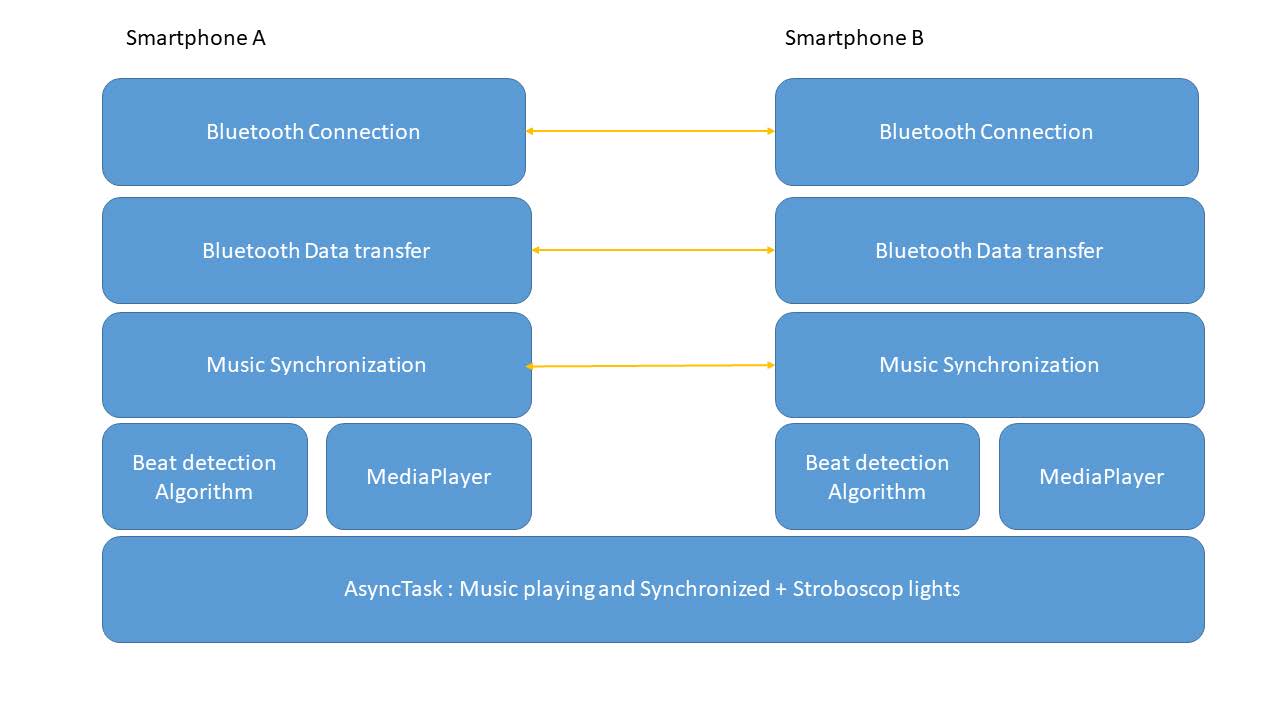

SALTApp : Sound And LighT Application Speakers and strobe light with multiple smartphones

Alexandre Allani and Faycal Baki

Spring 2018

Today, Bluetooth speakers can deliver really good sound. However, there are many situations where one does not have a Bluetooth speaker at hand. Moreover, these devices need to be charged, and some of them are quite cumbersome. Furthermore, even inside a house, where Bluetooth speakers might be usable, it is difficult to create a nightclub atmosphere, since strobe lights are expensive and ill-suited to homes. In this paper, we discuss a system that uses two (or more) smartphones to create a combined speaker, by synchronizing the speakers of each smartphone, and a strobe light, by using the torch of each smartphone. First, we developed an application that switches the torch of one smartphone on and off according to the beat of the music. Then we developed an application that shares a track between two smartphones, plays it, and turns the torches into a strobe light.

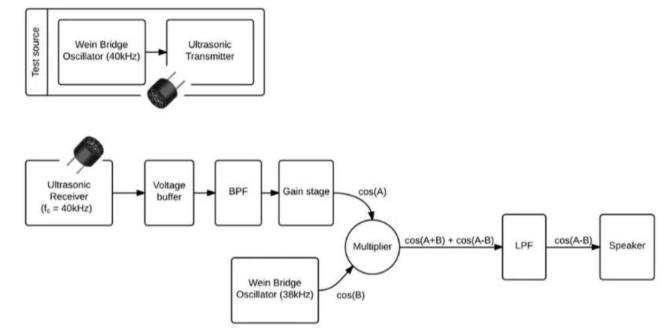

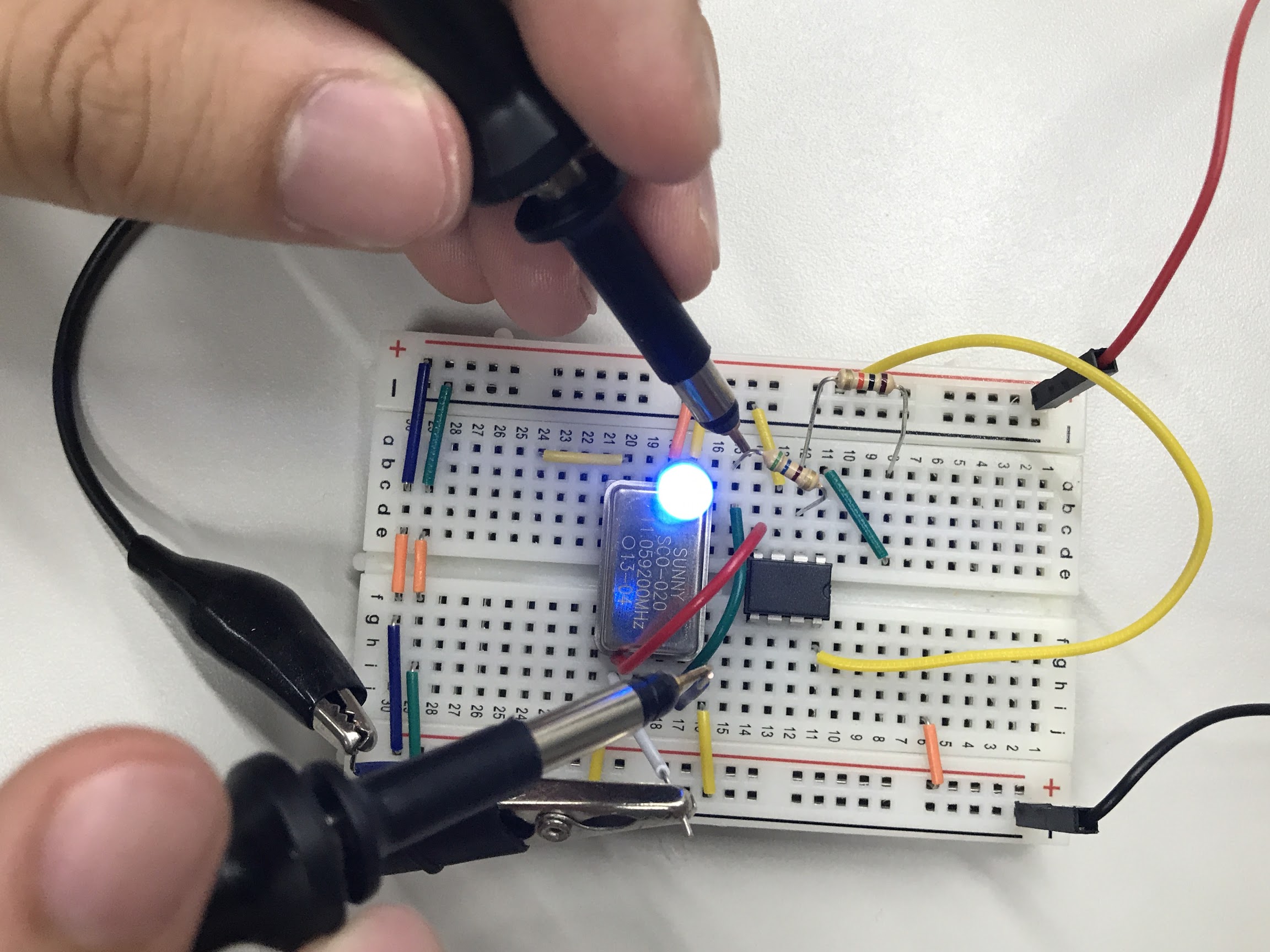

Body Coupled Communication Using Commodity Devices

Soo Young Park, Jun Hyuk Chang, and Wan Ju Kang

Spring 2017

Traditional wireless communication schemes have always faced the threat of man-in-the-middle (MITM) attacks. Malicious entities can attempt to eavesdrop on, jam, or spoof electromagnetic signals traversing mid-air, and security research therefore takes up a significant portion of today's wireless communications research. Here, we present a body-coupled communication (BCC) scheme using commodity devices. The BCC transmitter uses the human body as an electrical conductor and transmits electrical signals through it. The BCC receiver senses the BCC signal, filters bodily noise, and amplifies the meaningful component of the signal. BCC differs from traditional schemes in that it uses the human body as a wet wire. Thus, BCC is inherently impenetrable to conventional MITM attack tools unless the attacker is suspiciously close to the person using a BCC device. Existing BCC literature demonstrates that such attacks are indeed virtually impossible from a distance. However, previous research has implemented BCC with custom devices, which may be difficult to acquire from the end user's perspective. In this study, we implement the BCC transmitter with an Arduino Uno board and the BCC receiver with a smartphone readily available on the market.

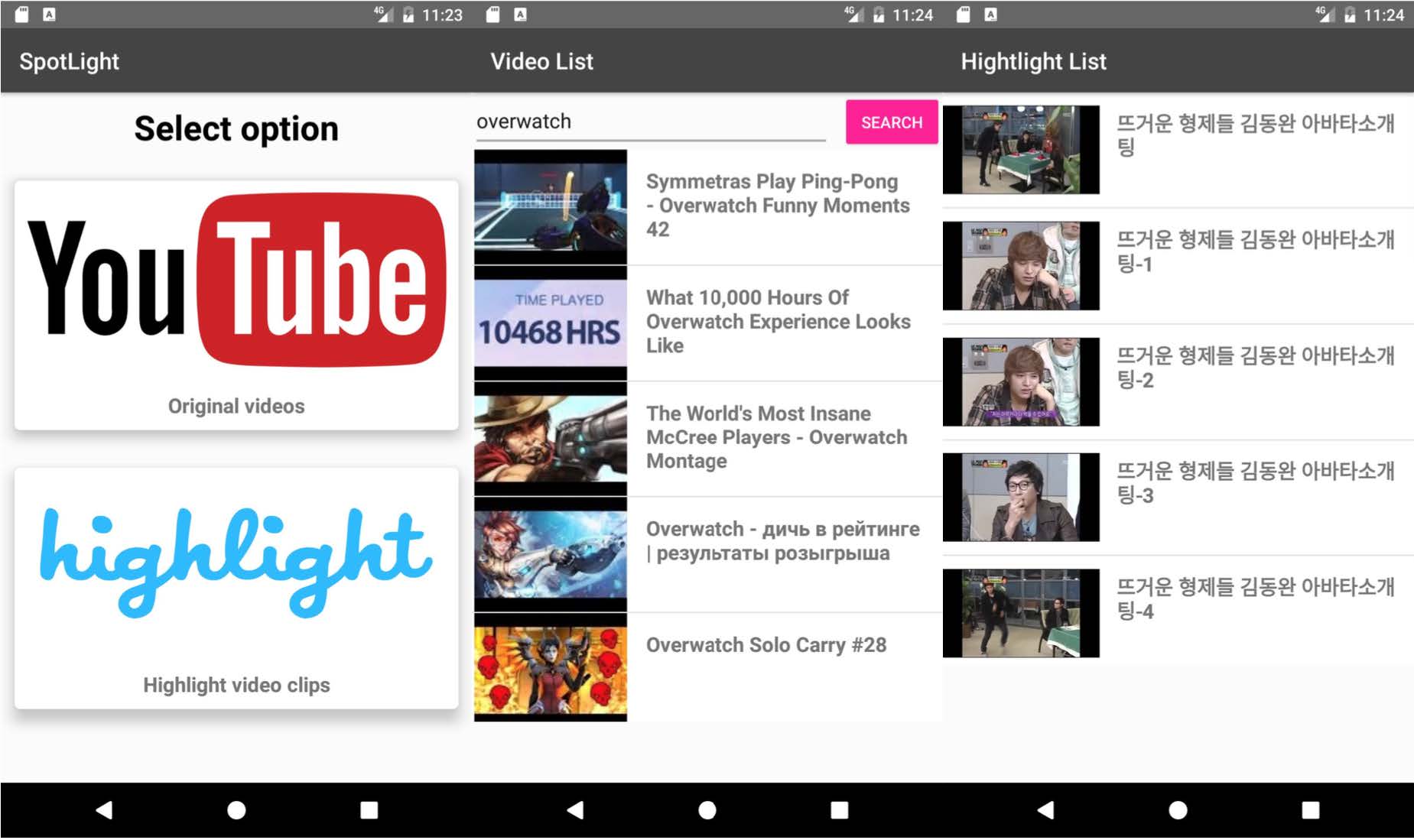

SpotLight: Extracting Video Highlight Based on Human Reaction

HyoungSeok Kim, Hyunsung Cho, and Jaewon Jung

Spring 2017

This report presents SpotLight, a system that extracts and generates video highlights based on viewers' reactions to the video. Previous work either relies on numerous sensors to collect user reactions or requires viewers' manual participation throughout the video. SpotLight uses a camera to capture viewers' facial expressions and a face analyzer to extract useful information from them. SpotLight then generates video highlights from the analyzed data using a simple heuristic tuned through a user study. As a result, we found that it is possible to automatically generate video highlights without hurting usability, and that the generated highlights were meaningful.

MulFin: Enriching Touch Experience by Leveraging Multiple Fingers

Taesik Gong, Youngsoo Jang, and Juhee Lee

Spring 2017

More than ten years after the birth of smart devices, the touch screen is one of the most distinctive interfaces between humans and devices. Even though the touch screen is the main interface for giving input to a device, current touch screens treat touches from different fingers as the same input. As a result, many cumbersome touch types have emerged, such as long touch, double touch, and 3D touch, which are difficult to distinguish from one another. In this paper, we address this limitation of current touch screens by identifying each finger as a different input, and we present MulFin. Specifically, MulFin interprets a touch with the thumb as touch type one, a touch with the index finger as touch type two, and so on. MulFin enriches the touch experience by differentiating each finger and providing different functions for each. We design MulFin on a commodity smartphone and implement a demo drawing application to show one of its use cases. We also evaluate the system in various aspects, including identification accuracy and latency, which shows that such a system is feasible in practice.

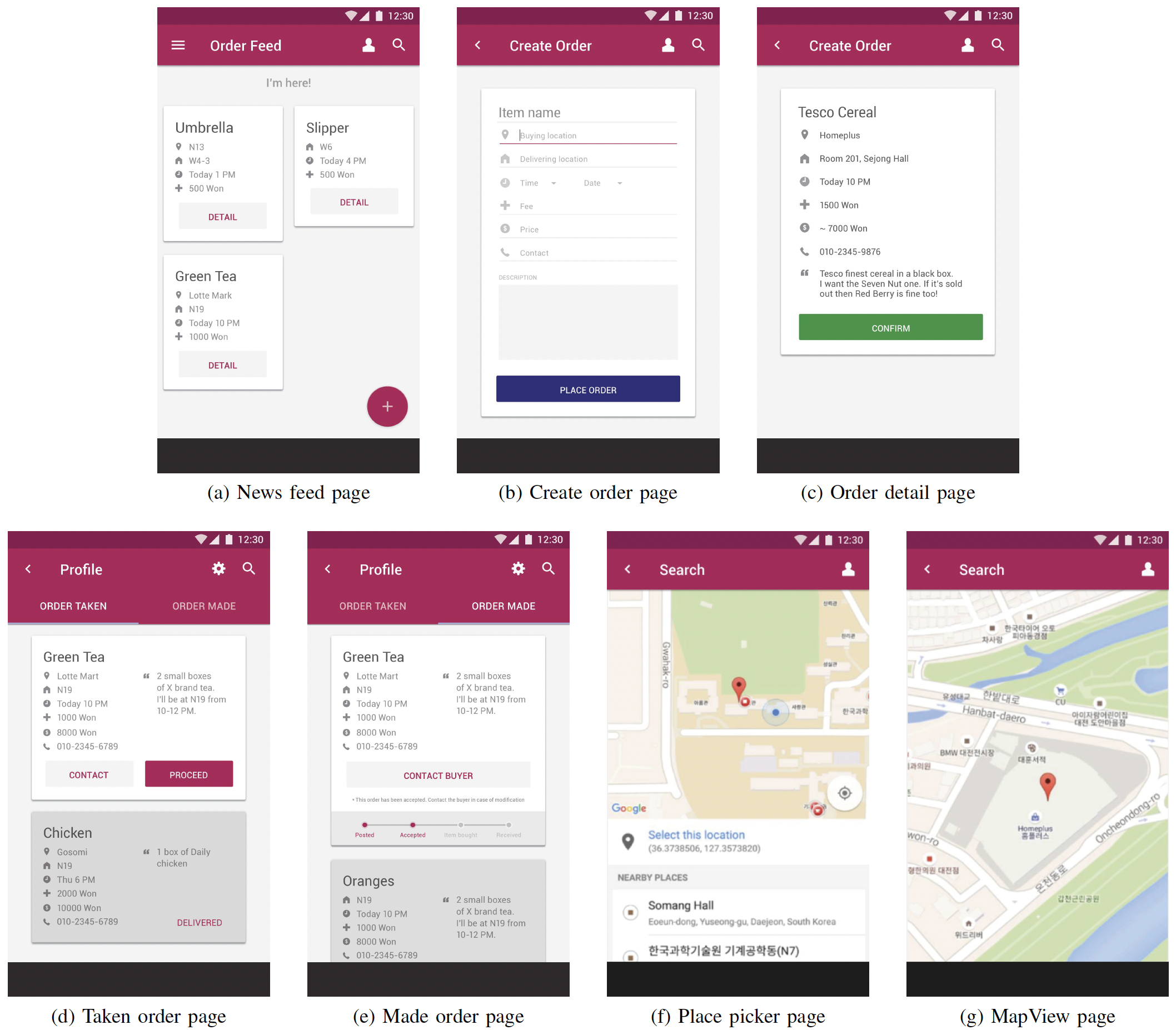

OnTheWay: A New Platform for Local Delivery Service

Chayanin Wong, Jinnapat Indrapiromkul, and Duong Nguyen

Spring 2016

People in a community tend to share the same set of frequently visited places. However, as modern communities grow, connections among members tend to be rather weak. One member might want to buy an item from a specific place that another member is returning from, meaning that the returning member could have fetched the item and delivered it. Convenience and energy efficiency would have improved had these two community members communicated. Previous work has attempted to solve this problem with explicit delivery services. In this paper, we propose an application called OnTheWay, which lets people at one location pick up and deliver items on the way to their destination for an economic incentive. We implemented OnTheWay with an Android front-end application and a Java web application as the back-end server. Our evaluation shows that users were satisfied with our application, although the lack of user evaluation made transactions an uncomfortable experience for users.

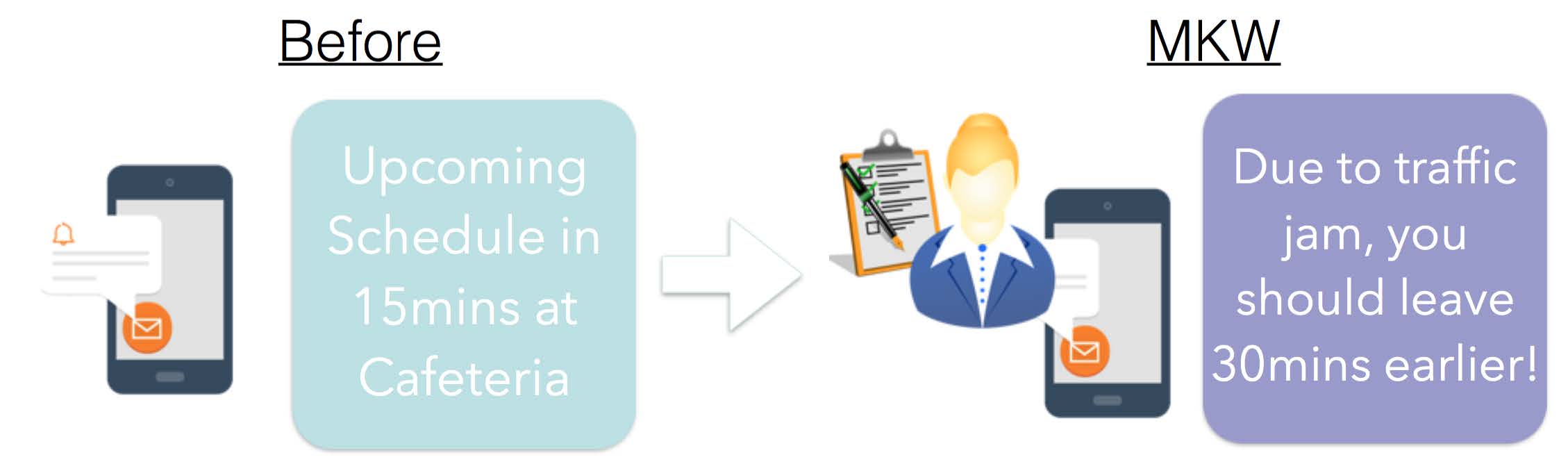

MKW: Accurate Schedule Alarming Based on User Profiling

Soya Park, Soowon Kang, and Hawoon An

Spring 2016

Time management applications vary widely in implementation, but the most commonly used are schedulers. A scheduler lets us keep track of where the next event is happening and when to prepare and leave. However, it is not good enough to help us be punctual. Current schedulers only provide flat alarms a constant, user-predetermined length of time ahead. The problem is that when (1) the user happens to move to another place, (2) the user chooses another means of transportation, or (3) traffic conditions change unexpectedly, the alarms provided by the scheduler are no longer accurate.

Motivated by this, we explore using the mobile phone's sensors to capture the user's behavioral characteristics and provide more accurate alarms before the user's scheduled events. We demonstrate that our application, "Mommy Knows When", delivers highly accurate alarm timing based on the user's characteristics and means of transportation, so that the user arrives at the next event's location on time.

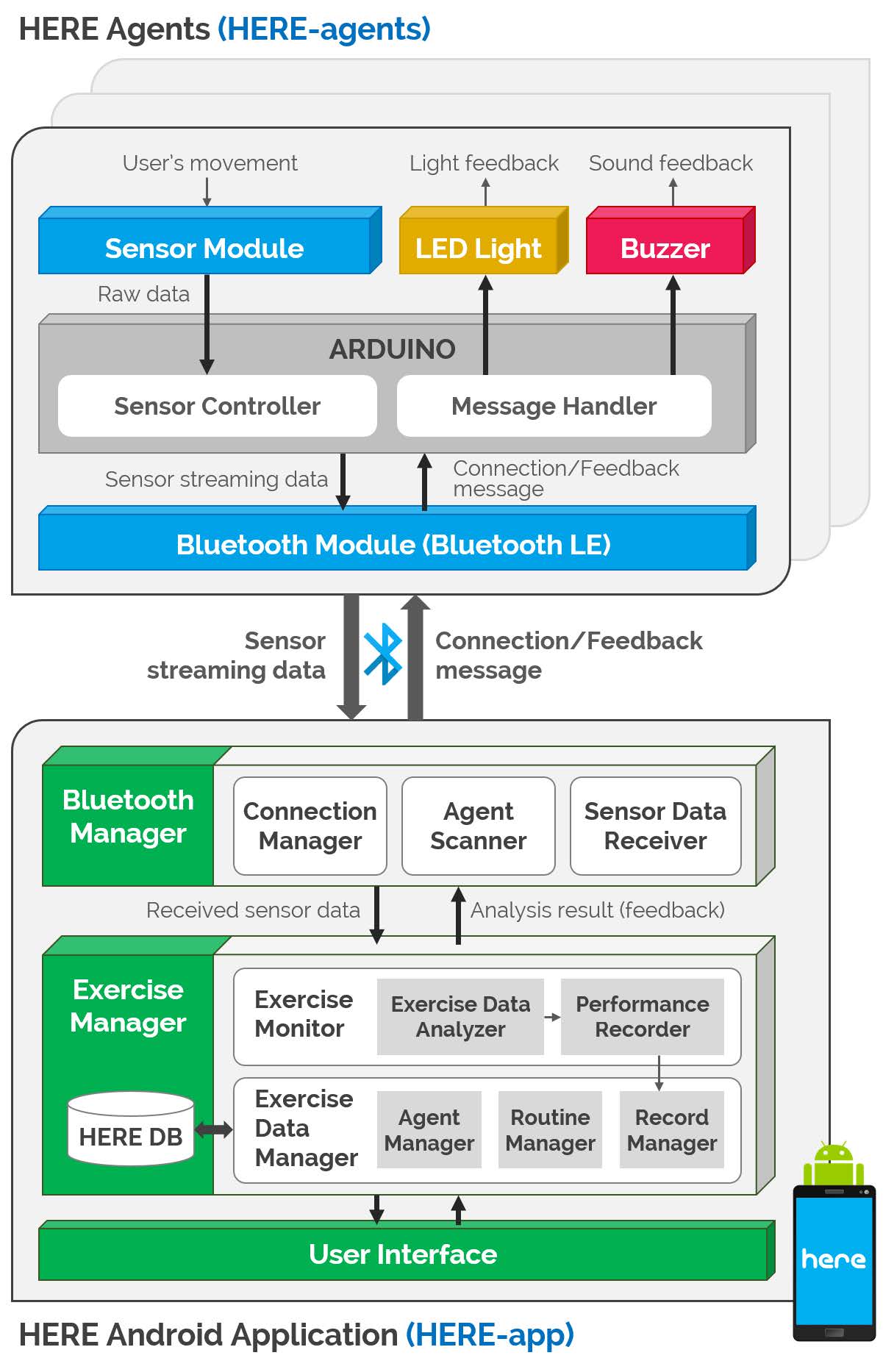

HERE: Home Exercise Reminder via Real-time Sensing of Exercise Equipments

Sunggeun Ahn, Young-Min Baek, and Jiyoung Song

Spring 2016

As the simplest and cheapest form of health care, many people resolve to exercise at home with equipment such as dumbbells and push-up bars. However, in most cases, home exercise equipment is used neither regularly nor effectively by its owners. Although the reasons people cannot use it regularly depend on their habits and individual characteristics, three common reasons can be identified. First, home fitness equipment is usually out of sight. When we return home, it does not motivate us because it is hung on the wall or just placed in the room like furniture. Second, home fitness equipment gives no feedback while we exercise, so we easily get bored and have to motivate ourselves. Third, our home exercise activities are not recorded by the equipment, so we cannot set and manage exercise goals and track progress systematically. Despite these problems, there is no proper service to make home exercise more enjoyable. To address these issues, we propose HERE (Home Exercise REminder), a system to improve the home exercise experience. For automated analysis of the user's movement, HERE uses equipment-mounted sensors to monitor values in real time. HERE uses data from inertial measurement sensors, and the HERE Android app profiles the sensor data to extract meaningful events and provide real-time feedback. Our demonstration with three representative pieces of home fitness equipment shows that HERE can analyze exercise data with reasonable accuracy. Furthermore, exercising at home with HERE can help boost interest through real-time feedback and effective exercise management.

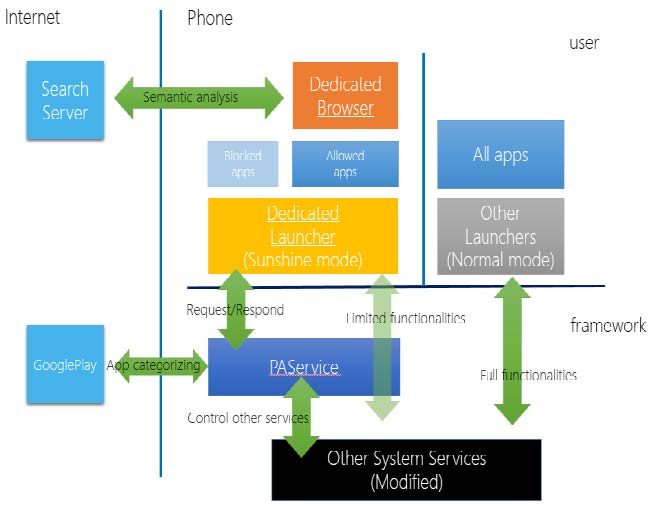

Sunshine: The Service for YOU to eNhance Self-management Helpfully and Intelligently from Now to forEver

Bohun Seo, Hayeon Lee, and Insu Jang

Spring 2016

Recently, as smartphones have come into wide use, many people use them all the time and are distracted by them: students cannot focus on their studies, employees cannot concentrate on their jobs, and so on. There are smartphone apps that help people with this problem, but they are inflexible and ineffective. We propose SUNSHINE, which runs as part of the Android system. It provides a natural app-control experience and automatically filters Internet web content using semantic analysis. It also adopts gamification to motivate users to keep using SUNSHINE.

TapSnoop: Leveraging Tap Sound to Infer Tapstrokes on Mobile Touch-screen Devices

Hyosu Kim and Byunggill Joe

Fall 2015

We propose a novel tapstroke inference attack, called TapSnoop, that enables attackers to accurately and robustly infer values a user types on an on-screen keyboard. Inferring tapstrokes is challenging due to 1) low stroke intensity, 2) the dense arrangement of keys, and 3) ambient noise. We address these challenges by revealing the unique acoustic characteristics of tap sounds in the time and frequency domains, which TapSnoop exploits as a side channel for tapstrokes. For accurate tapstroke inference, we develop tap detection and inference algorithms that leverage the frequency characteristics of tap sounds. In particular, we introduce two acoustic features, the Low Frequency Spectrogram and the Warped Spectrogram, and apply them to our tap detection and inference algorithms, respectively. Moreover, by combining multiple sensors, we develop a noise cancellation algorithm that further improves accuracy in the presence of ambient noise. Extensive experiments collecting the tapstrokes of 10 real-world users under various conditions and environments show that TapSnoop achieves 91.5% and 80.6% average inference accuracy for the two keyboard types, respectively, in stable environments. TapSnoop also achieves accuracy of up to 97.3% and 92.4% even with a moderate level of noise (e.g., 60 dB).

Washer Browser: Real-Time Washer State Tracking System

Byeong Eui Jang and Sera Lee

Fall 2015

Students who live in dormitories use shared washers in the dormitory laundry room. However, sometimes all washers are already occupied when students bring their laundry, so they must wait until a washer becomes available. Also, when they go to the laundry room to collect their finished laundry, someone may have taken it out to start another load. These problems are caused by not knowing the state of the washers in real time. Therefore, we built the Washer Browser system, which analyzes washer state in real time using the machine's vibration pattern and provides it through a mobile application. Washer Browser can find available washers nearby and notify the user when the target washer has finished.
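The abstract states only that washer state is inferred from the machine's vibration pattern. A minimal sketch of one plausible approach, assuming the sensor reports vibration magnitudes at a fixed rate, is windowed variance thresholding with a short debounce before declaring a cycle finished. The window size, threshold, and debounce length are hypothetical values, not Washer Browser's.

```python
from statistics import pvariance


def washer_states(magnitudes, window=50, threshold=0.05, idle_windows_to_finish=3):
    """Label fixed-size windows of vibration magnitude as 'running' or 'idle'.

    Returns (states, finished), where `finished` lists the window indices at
    which a running cycle is judged complete. Assumptions (hypothetical):
    variance above `threshold` means the drum is moving, and a cycle counts as
    finished only after several consecutive idle windows, so that a brief
    pause between phases is not mistaken for the end of the cycle.
    """
    states, finished = [], []
    was_running = False
    idle_streak = 0
    for w in range(len(magnitudes) // window):
        segment = magnitudes[w * window:(w + 1) * window]
        running = pvariance(segment) > threshold
        states.append("running" if running else "idle")
        if running:
            was_running = True
            idle_streak = 0
        else:
            idle_streak += 1
            if was_running and idle_streak == idle_windows_to_finish:
                finished.append(w)  # this is where a "washer finished" notification would fire
                was_running = False
    return states, finished
```

The debounce trades notification latency for robustness: a longer idle requirement avoids false "finished" alerts at the cost of notifying the user a little later.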

NFC PC LOCKER: Use your smartphone to lock PC

Hoon Kim and Patrick Langer

Fall 2015

NFC PC Locker is an Android application that can unlock a computer using NFC (Near Field Communication) on smartphones. By combining NFC with other smartphone sensors, such as the fingerprint sensor, the app achieves a higher level of security for your computer. Furthermore, instead of typing long passwords or forgetting them, users can simply tap their smartphone on the computer, which is much more convenient.

NFC PC Locker also implements additional techniques depending on the user's preference, such as Secured Password Sharing (SPS).

MilliCat: Improving Text-based Mobile Communication With Images As First-class Citizens

Chia-Wei Wu and Alvin Chiang

Spring 2015

We present a system for augmenting text chats with images pulled from the Internet in real time. Users are presented with image suggestions that can be chosen to replace typed text. We discuss how to present these suggestions, on what basis to retrieve the proper images, and how to reduce latency so as to allow fluid user interaction.

Hak-yo: Notification System about Friends Nearby

Chunjong Park and Junsung Lim

Spring 2015

Smartphones keep us connected online. We are sometimes so concentrated on our smartphones that we fail to engage with people who are physically around us. Hak-yo is an Android app that notifies the user about nearby acquaintances so that the user can take a moment away from the smartphone and actually engage in offline social interaction. Regardless of the user's location, Hak-yo sends an Android push notification to the user. A user can change Hak-yo's notification settings if she does not wish to be notified at certain times of the day. Last but not least, the user can also check a friend's relative proximity through the app.

Urban Facility Monitoring Platform Design and Implementation

Yoon-Pyo Koo and Deok-Jae Song

Spring 2015

We present a public facility monitoring service for mobile device users. An ultrasonic sensor is placed at each selected facility item to sense whether it is occupied. The sensing signal passes through the relay and the server and finally reaches the mobile client. In this way, the real-time usage state of the facilities in a building is provided to mobile users. For now, restrooms are the main public facility considered in our system, but it can be extended to support other kinds of facilities, such as parking lots or personal resting places.

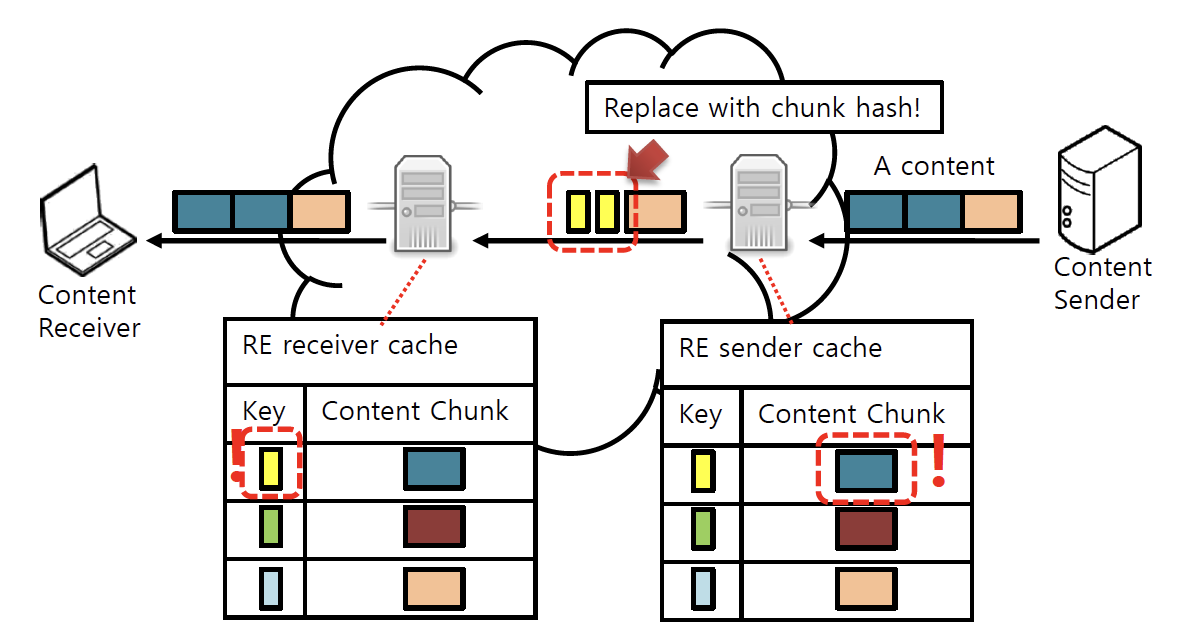

Adaptive RE for Dynamic Mobile Network Environments

Kilho Lee and DaeLyong Jeong

Spring 2015

Protocol-agnostic Redundancy Elimination (RE) has been widely used and successfully improves network performance. Applied to mobile networks, RE could be a great solution for networks congested by the rapid growth of mobile traffic. However, changing mobile environments bring new challenges for enabling mobile RE and making it efficient. In this project, we propose an RE technique that adapts to changing mobile environments and validate its effectiveness with simulation experiments. The simulation results show that adaptive RE can improve network transmission time by 1-4% under dynamically changing network conditions.
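For readers unfamiliar with RE, the basic mechanism can be sketched as follows: the sender and receiver keep synchronized caches of previously seen chunks, and the sender replaces repeated chunks with short fingerprints. This minimal sketch uses fixed-size chunks and SHA-1 fingerprints as simplifying assumptions, and it does not model the adaptive part of the proposed technique; all function names are hypothetical.

```python
import hashlib

FINGERPRINT_BYTES = 20  # size of a SHA-1 digest


def split_chunks(data: bytes, chunk_size: int):
    """Fixed-size chunking; deployed RE systems often use content-defined chunking instead."""
    return [data[i:i + chunk_size] for i in range(0, len(data), chunk_size)]


def encode(data: bytes, sender_cache: dict, chunk_size: int = 64):
    """Sender side: replace chunks the receiver has already seen with their fingerprint."""
    encoded = []
    for chunk in split_chunks(data, chunk_size):
        fp = hashlib.sha1(chunk).digest()
        if fp in sender_cache:
            encoded.append(("ref", fp))
        else:
            sender_cache[fp] = chunk
            encoded.append(("raw", chunk))
    return encoded


def decode(encoded, receiver_cache: dict) -> bytes:
    """Receiver side: rebuild the payload, caching every raw chunk it receives."""
    parts = []
    for kind, value in encoded:
        if kind == "raw":
            receiver_cache[hashlib.sha1(value).digest()] = value
            parts.append(value)
        else:
            parts.append(receiver_cache[value])
    return b"".join(parts)


def wire_size(encoded) -> int:
    """Bytes on the wire, ignoring framing: raw chunks cost their length, refs cost a digest."""
    return sum(len(value) if kind == "raw" else FINGERPRINT_BYTES for kind, value in encoded)
```

Sending the same 128-byte payload twice with 64-byte chunks, the second transmission consists of two 20-byte references instead of 128 raw bytes.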

Knocky: An Experience-Sharing Music Player

Sunwoo Kim and Seungchul Lee

Spring 2015

Music has become one of the most impactful resources for modern people. In addition, emerging IT devices and services give us great opportunities to share our belongings with other people. In this regard, we propose Knocky, an experience-sharing music player. Its underlying knock event detection and wireless music coordination techniques let users spread their music experiences to other people. Through an analysis of the knock detection algorithm, we show that Knocky achieves reasonable knock detection quality.
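The abstract does not describe Knocky's detection algorithm. A common baseline for detecting knocks with a phone's accelerometer is spike detection against a slowly tracked baseline, with a refractory period so one knock is not counted twice. It is sketched below under that assumption; the threshold, smoothing factor, and refractory window are illustrative values, not the project's.

```python
import math


def detect_knocks(samples, sample_rate, threshold=2.5, refractory_s=0.15):
    """Return sample indices of knock events in a stream of (x, y, z) accelerations.

    A knock is assumed to be a short spike: the acceleration magnitude deviates
    from its running baseline by more than `threshold` (m/s^2). After a
    detection, further spikes are ignored for `refractory_s` seconds so that a
    single knock's ringing is not counted twice. All parameters are hypothetical.
    """
    knocks = []
    baseline = None
    refractory = int(refractory_s * sample_rate)
    last = -refractory - 1  # allow a detection at the very first sample
    for i, (x, y, z) in enumerate(samples):
        mag = math.sqrt(x * x + y * y + z * z)
        if baseline is None:
            baseline = mag
        if abs(mag - baseline) > threshold and i - last > refractory:
            knocks.append(i)
            last = i
        # Slowly track gravity and orientation changes with an exponential moving average.
        baseline = 0.95 * baseline + 0.05 * mag
    return knocks
```

Because the baseline adapts slowly, a phone resting in different orientations still reads roughly 9.81 m/s^2 at rest, and only sharp impacts cross the threshold.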